The ethics of artificial intelligence

Digital technologies hold great promise for the economy and society. But some recent developments, such as face recognition, also present ethical dilemmas. The challenges are immense for Switzerland, which is one of the leading developers of artificial intelligence (AI).

Digitalisation has led to an explosion of new ethical challenges: the loss of jobs due to automation, data protection issues, face recognition, deepfake videos, and most disturbingly, the misuse of AI and robot technologies.

The most recent example is the appearance in autumn 2022 of ChatGPT, an AI-based chatbot that has caused worries in various sectors due to its ability to do things like write code and fix mistakes in computer programs.

ChatGPT also raises various ethical questions, especially since it can write marketing texts, detailed reports, and even whole books – misuse is inevitable, and teachers are already concerned.

It is hard to imagine what would happen if AI fell into the wrong hands. If you take the issue of “killer robots”, for example, the international community cannot agree on whether to introduce strict rules to control their use. Talks at the United Nations in Geneva on regulating lethal autonomous weapons have so far had limited results.

Such weapons do not yet exist, but campaigners say they could be deployed on the battlefield in just a few years, given the rapid advances in and spending on AI and other technologies.

Switzerland has made huge strides in the field of AI – software which teaches itself to think and make decisions like a human, and which learns by processing huge amounts of data.

Numerous Swiss start-ups are developing so-called intelligent systems, in the form of robots, apps or digital assistants, intended to make our lives easier. But the companies face the constant ethical dilemma of where to draw the line when it comes to selling their technologies. This predicament is particularly acute for those who both research technologies and sell them. Swiss universities, for example, participate in projects funded by the US military – from aerial surveillance cameras to autonomous reconnaissance drones.

Switzerland takes a position

Switzerland strives to be at the forefront of the movement to shape ethical standards for the use of AI. But what does Switzerland have to do to become an AI pioneer? One interesting initiative is the creation of the “Swiss Digital Trust Label” aimed at boosting user confidence in new technologies. The idea is to give users more information on digital services, thereby creating transparency and ensuring respect for ethical values. “We want to help guarantee that ethical and responsible behaviour also becomes a competitive advantage for businesses,” says Niniane Paeffgen, director of Swiss Digital Initiative, the organisation behind the “Swiss Digital Trust Label”.

The need for regulations and clear definitions of the ethical limits is undisputed. Switzerland’s Federal Council (executive body) has set up an interdepartmental working group on Artificial Intelligence which adopted AI guidelines at the end of November 2020.

“It is important that Switzerland utilises the opportunities of AI to the full,” says the working group’s recommendation to the Federal Council. “We have to establish the best possible framework conditions that allow Switzerland to play a leading role in research, development and application of AI. At the same time, the risks have to be addressed and effective measures introduced.”

Different perceptions of ethics

A growing number of voices are calling for a globally binding standard. A European Commission expert group on artificial intelligence presented a set of Ethics Guidelines in late 2018. In June 2023 the European Parliament agreed to a first draft of the law on artificial intelligence (the Artificial Intelligence Act).

In December 2023 the European Parliament, the European Commission and the member states finally agreed on a binding set of rules for the use of artificial intelligence. It is the first of its kind in the world.

The regulation aims to ban AI systems that pose an “unacceptable” risk to citizens and democracy. This includes, for example, systems that use sensitive personal data for psychological manipulation, social classification and racial, sexual and religious profiling.

The EU described the decision as a “historic moment”. But where does this leave Switzerland, which as a non-member was unable to have a say in the regulation of AI?

Numerous other guidelines and expert opinions exist on the issue. However, a systematic analysis conducted by the Health Ethics and Policy Lab at the federal technology institute ETH Zurich found that no single ethical principle was common to all of the 84 reviewed documents on ethical AI.

Still, five principles were mentioned in more than half of the 84 reviewed documents: transparency, justice and fairness, preventing harm, responsibility, and data protection and privacy.

Heated discussions about AI are also reported at tech giants like Google. In 2021, the company fired two ethics experts following a dispute, a decision that calls into question whether a moral code surrounding AI is really a priority for Big Tech.

A new AI research centre has just been established at ETH Zurich, which serves as a hub for various research departments to work more closely together. Alexander Ilic, head of the centre, says he wants to put people at the centre of its AI work.

“The person who designs something new carries a certain responsibility. Hence, it is important to us that we help shape this dialogue and let our European values influence the development of AI applications,” Ilic said in an interview in November.

The most important argument in favour of AI is probably that it makes our lives easier and saves time; programmes like ChatGPT can take over monotonous tasks, for example. At best, AI can even save lives. The Swiss rescue agency Rega is testing an autonomous rescue drone that uses AI to locate people lost in the Swiss Alps.

The European Parliament’s expert group meanwhile estimates that AI can help reduce global greenhouse gas emissions by 1.5-4% by 2030. That is roughly equivalent to the emissions of the global aviation industry.

“Transforming the energy, food and automotive industries as well as our living habits will be very challenging,” said the report by the federal working group on AI. “As a key technology, AI will play an important role in taking up these challenges.”

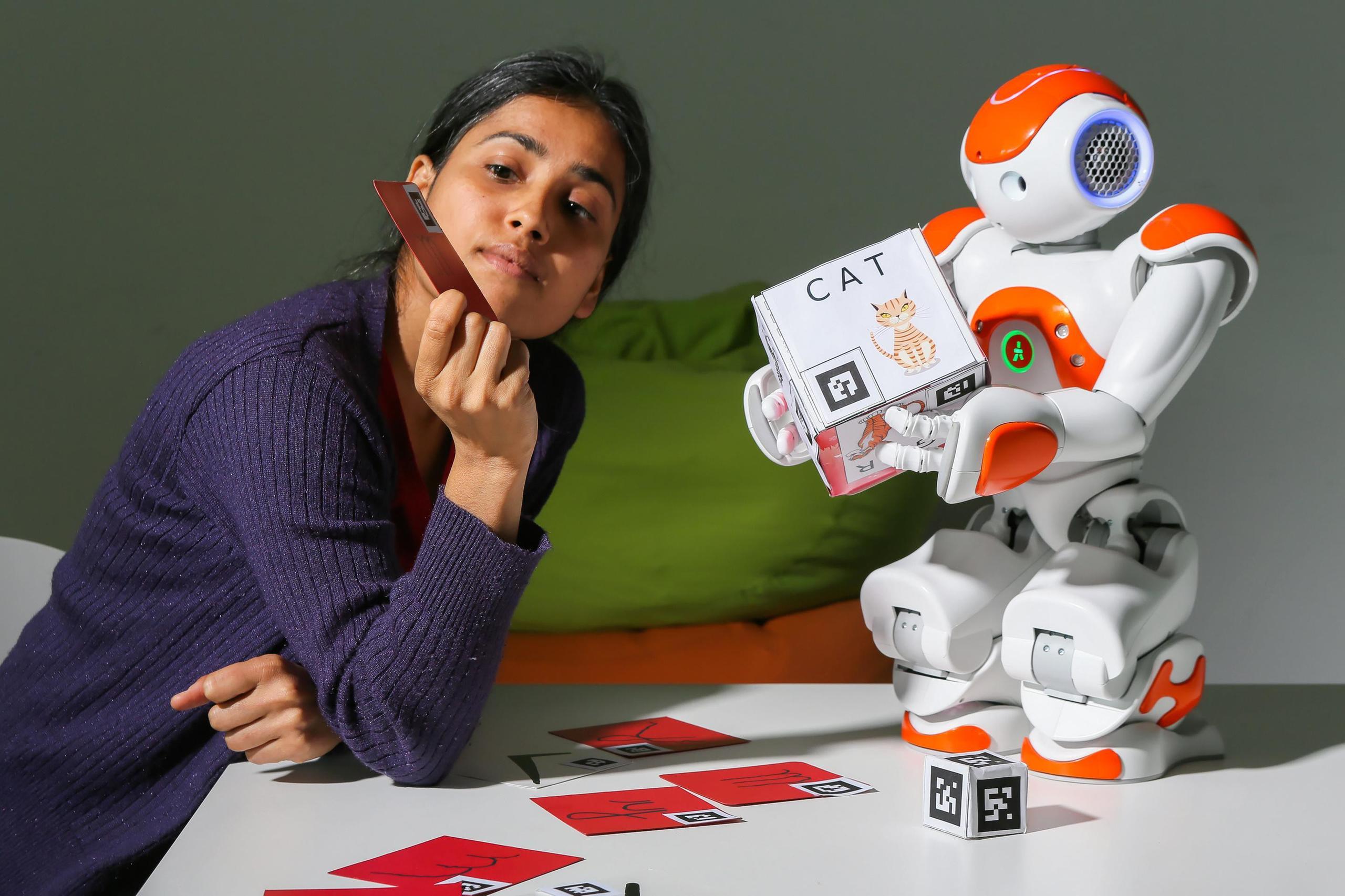

The Covid-19 pandemic has also been influential in the field of AI, with numerous services being digitalised overnight. This led to an increase in the use of AI in our everyday lives, such as robots that can disinfect schools or hospitals.

Fear of job loss

Yet many people remain highly critical about AI. “Artificial intelligence is either the best or the worst thing ever to happen to humanity,” the British physicist Stephen Hawking once said.

Critics of “killer robots” fear that AI and robots will threaten not only jobs but also humans themselves. Societies are growing more and more sceptical about AI, even as industry is in the midst of a technological revolution. Big data, AI, the Internet of Things (IoT) and 3D printers have changed the skills required from employees as more simple jobs are delegated to machines.

It is likely therefore that AI will replace humans at the workplace sooner rather than later. Research has shown that automated systems can do half of the current jobs more quickly and efficiently than humans.

Swiss researcher Xavier Oberson proposes taxing the use of robots if they perform jobs that have traditionally been done by humans. He suggests that funds generated by such a tax could go towards financing social security, as well as training for the unemployed.

But where do consumers stand in this debate? They often have reservations about AI applications. According to studies, they are less concerned about AI eliminating or controlling mankind than about the protection of their data. For this reason, AI producers are increasingly under pressure to make products that are transparent for users.

This is one of the goals of the new ETH Zurich centre, says Alexander Ilic: “We want to show in dialogue with the public what AI can achieve. There are many tangible examples, such as what is happening in medicine and digital health which is easy to understand and brings many positive changes. We want to prove that artificial intelligence supports people and does not replace them.”

In compliance with the JTI standards

More: SWI swissinfo.ch certified by the Journalism Trust Initiative